One hackathon. One big idea. A codebase full of untested code, and a hunch that AI could fix it. That’s what happens when you give Tinder engineers unstructured time and a problem to solve. There’s no such thing as business as usual here. There’s just the next thing worth building.

At Tinder, company-wide hackathons are a part of how we work, giving everyone the chance to innovate and create new fixes and features. This year’s hackathon had two tracks: one for product ideas and one for internal tooling and engineering improvements.

Our team came into the hackathon knowing what problem we wanted to solve: how to better prioritize test coverage, an important part of the process that can slide to the back of the to-do pile when time is short.

Test Coverage: A Problem and a Priority

Unit tests aren’t glamorous. They don’t ship features, they don’t move metrics, and in a world where roadmaps are packed and engineers are stretched thin, writing tests for existing code is the kind of work that can easily slide to the next sprint.

But that deferral comes with a compound cost. As we undertake large-scale efforts like modularization, rewrites, and major refactors, tests are a quick and critical way to ensure our logic behaves how we expect it to. Without tests, adding new features and refactoring existing ones becomes riskier, because implicit behaviors and assumptions that were never codified in tests cannot be validated and can break silently.

We know tests are important, but we were up against two related constraints: 1) product work is core to what we do and will always take priority over test coverage, and 2) simply put, there are not enough hours in the day for engineers to backfill years of untested code.

We needed a different approach. The hackathon gave us one.

The Hackathon: Undercover Agent Is Born

Our team knew our problem and we had a working hypothesis: that AI agents may be good enough at understanding code to write meaningful unit tests. Not just boilerplate stubs, but real tests that understand intent and improve systems.

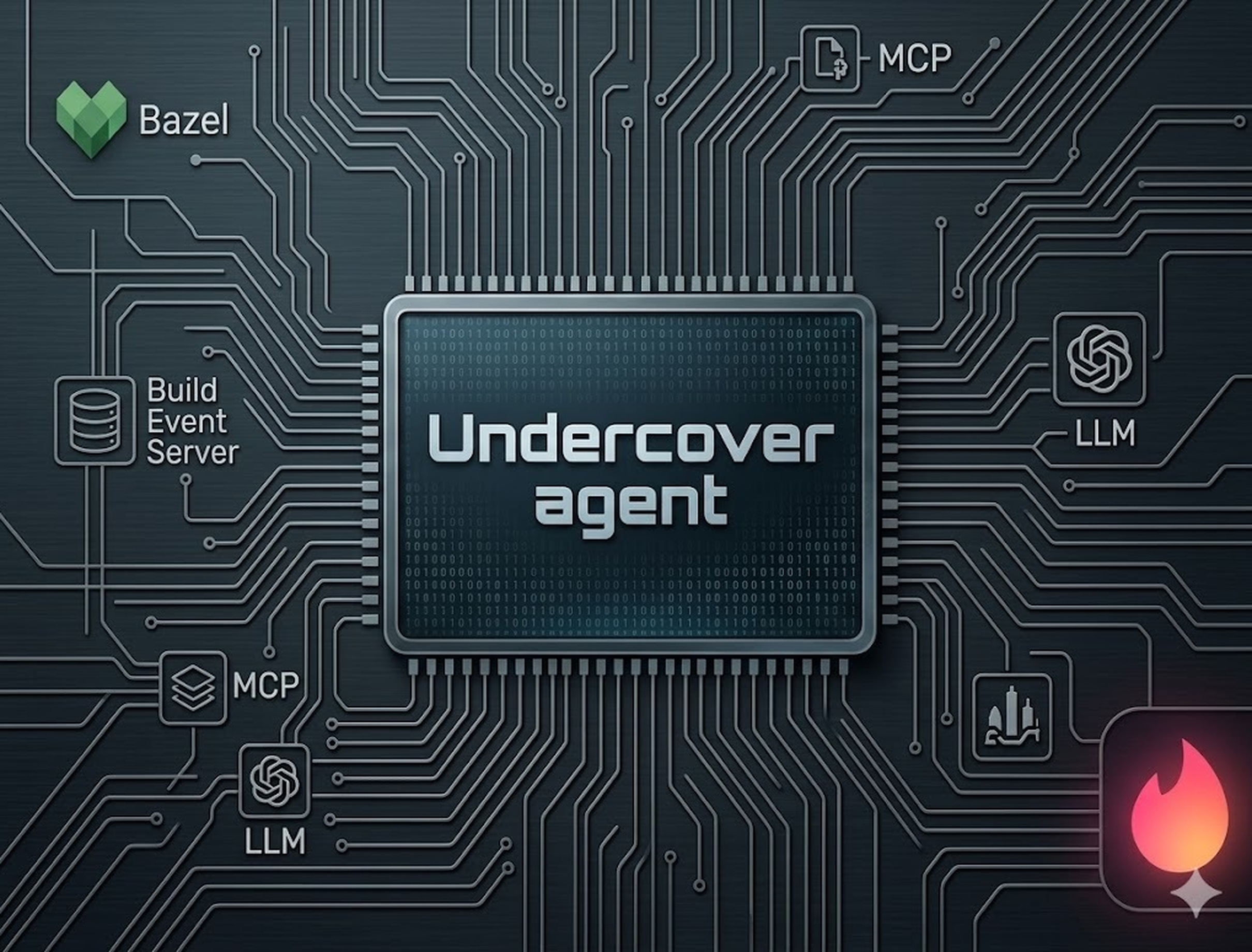

We called our entry Undercover Agent. The idea was straightforward: give an agent access to our test coverage data, and let it find the gaps and fill them. The build was a little more complicated.

Before an agent can write a test, it needs to know what’s already covered, and that information lives in the build. At Tinder, we use Bazel for our iOS builds, and prior to the hackathon we had already enabled a Build Event Service (BES), a Bazel technology that exposes all artifacts from a build to downstream consumers in real time.

This gave us a crucial piece of infrastructure. The BES can emit LCOV coverage data, a standard format for tracking which lines, branches, and functions are exercised by tests. The agent could, in principle, query this data and know exactly which parts of the codebase had no coverage at all. Now we just needed a way for the agent to be able to ask for it.
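
To make the idea of "finding the gaps" concrete, here is a minimal Python sketch that reads an LCOV tracefile and lists the lines with zero hits per file. It relies only on the standard `SF:`, `DA:` and `end_of_record` records of the LCOV format. The function name and the sample paths are hypothetical, and this is not the code Undercover Agent uses.

```python
from collections import defaultdict


def uncovered_lines(lcov_text):
    """Map each source file in an LCOV tracefile to its uncovered line numbers."""
    result = defaultdict(list)
    current = None
    for raw in lcov_text.splitlines():
        line = raw.strip()
        if line.startswith("SF:"):
            # SF:<path> opens the record for one source file.
            current = line[3:]
            result[current]  # make sure fully covered files still appear
        elif line.startswith("DA:") and current is not None:
            # DA:<line number>,<execution count>[,<checksum>]
            fields = line[3:].split(",")
            line_no, hits = int(fields[0]), int(fields[1])
            if hits == 0:
                result[current].append(line_no)
        elif line == "end_of_record":
            current = None
    return {path: sorted(lines) for path, lines in result.items()}


# Hypothetical sample data, for illustration only.
SAMPLE = """SF:Sources/Profile/ProfileViewModel.swift
DA:10,4
DA:11,0
DA:12,0
end_of_record
SF:Sources/Chat/ChatService.swift
DA:5,1
end_of_record
"""

if __name__ == "__main__":
    for path, lines in uncovered_lines(SAMPLE).items():
        print(path, lines)
```

Files that map to an empty list are fully covered at line level. Branch (`BRDA:`) and function (`FNDA:`) records could be handled the same way.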

The Glue: Model Context Protocol

Model Context Protocol (MCP) has become a widely adopted standard interface for connecting AI agents to external tools and data sources. We implemented an MCP server that spoke directly to the BES, which allowed the agent to:

- Query current test coverage for any given file or target

- Receive up-to-date LCOV data as the build progresses

- Iteratively request new coverage information as it added tests

That last point is essential. An agent writing tests needs a feedback loop: write the test, run the build, check whether coverage improved, repeat. Without the ability to observe the results of its own work, it’s flying blind.
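
The loop described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not our implementation: `measure` and `write_test` are hypothetical callbacks standing in for "run the build and read coverage" and "ask the agent to add a test", and the stopping rules (a fixed attempt budget, stop on no progress) are choices made for the example.

```python
from typing import Callable


def improve_coverage(
    measure: Callable[[], float],
    write_test: Callable[[], None],
    max_attempts: int = 5,
    target: float = 100.0,
) -> float:
    """Repeat write-test / re-measure until coverage stops improving.

    Returns the best coverage percentage observed.
    """
    best = measure()  # baseline before the agent touches anything
    for _ in range(max_attempts):
        if best >= target:
            break
        write_test()         # hypothetical: the agent adds or edits a test
        current = measure()  # hypothetical: rebuild and read fresh coverage
        if current <= best:
            break            # no measurable progress, stop instead of guessing
        best = current
    return best


if __name__ == "__main__":
    # Fake coverage readings, for illustration only.
    readings = iter([40.0, 55.0, 70.0, 70.0])
    print(improve_coverage(lambda: next(readings), lambda: None))
```

The point of the sketch is the structure: every attempt is followed by a measurement, and the measurement decides whether the loop continues.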

Because we built the coverage interface as an MCP server, we got an added benefit: any agentic coding tool that supports MCP could use it out of the box.
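
For readers unfamiliar with MCP, the sketch below shows roughly what a coverage tool call looks like at the protocol level. MCP tool invocations are JSON-RPC 2.0 requests with the method `tools/call`, and results carry a `content` list plus an `isError` flag. Everything else here is an assumption made for illustration: the tool name `get_file_coverage`, its `path` argument, and the in-memory `COVERAGE` table are hypothetical, and a real server would normally be built on an MCP SDK that also handles initialization and `tools/list`.

```python
import json

# Hypothetical stand-in for per-file coverage totals gathered from the build.
COVERAGE = {
    "Sources/Chat/ChatService.swift": {"lines_found": 40, "lines_hit": 10},
}


def handle_request(request):
    """Answer one JSON-RPC request for the hypothetical `get_file_coverage` tool."""
    request_id = request.get("id")
    if request.get("method") != "tools/call":
        return _error(request_id, -32601, "Method not found")
    params = request.get("params", {})
    if params.get("name") != "get_file_coverage":
        return _error(request_id, -32602, "Unknown tool: %s" % params.get("name"))
    path = params.get("arguments", {}).get("path")
    entry = COVERAGE.get(path)
    if entry is None:
        # Tool-level failures are reported inside the result, flagged with isError.
        return _result(request_id, "No coverage data for %s" % path, is_error=True)
    percent = 100.0 * entry["lines_hit"] / entry["lines_found"]
    payload = {"path": path, "line_coverage_percent": percent}
    return _result(request_id, json.dumps(payload), is_error=False)


def _result(request_id, text, is_error):
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "result": {"content": [{"type": "text", "text": text}], "isError": is_error},
    }


def _error(request_id, code, message):
    return {"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}}
```

Because the agent only sees a named tool with JSON arguments, the same server can sit behind any MCP-capable coding tool without changes on the agent side.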

Engaging the Agent: /cover-modified-files

We built it so that engineers can easily deploy the agent using a simple slash command:

/cover-modified-files

When engineers run this command, the agent will automatically analyze currently modified files, identify which functions and branches lack coverage, write tests to cover them, and verify that coverage actually went up.
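
As a rough illustration of the first two steps, the Python sketch below turns the output of `git status --porcelain` into a list of modified Swift files and intersects it with known coverage gaps. Whether the agent discovers modified files this way is an assumption. The function names are hypothetical, and the parsing ignores edge cases such as quoted paths.

```python
def modified_swift_files(porcelain):
    """Extract added, modified, or renamed .swift paths from `git status --porcelain` output."""
    paths = []
    for line in porcelain.splitlines():
        if len(line) < 4:
            continue
        # Format: two status characters, one space, then the path.
        status, path = line[:2], line[3:]
        if "D" in status:
            continue  # deleted files need no tests
        if " -> " in path:
            path = path.split(" -> ", 1)[1]  # renames are shown as "old -> new"
        if path.endswith(".swift"):
            paths.append(path)
    return paths


def plan_work(modified, uncovered):
    """Keep only modified files that still have uncovered lines.

    `uncovered` maps a file path to its list of uncovered line numbers.
    """
    return {path: uncovered[path] for path in modified if uncovered.get(path)}


if __name__ == "__main__":
    # Hypothetical status output, for illustration only.
    status = " M Sources/A.swift\nD  Sources/B.swift\n?? Sources/C.swift\n M README.md\n"
    files = modified_swift_files(status)
    print(plan_work(files, {"Sources/A.swift": [11, 12], "Sources/C.swift": []}))
```

The result is the agent's work list for the command: files the engineer touched that still have lines no test exercises.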

To prove the idea end to end, we built a small proof of concept during the hackathon showing that the agent could run entirely autonomously, with no human in the interactive loop at all. Of course, in practice, all changes proposed by the agent are reviewed and approved by engineers before merging, and our long-standing guardrails remain firmly in place to ensure the security of our source code. But the important thing is: it worked.

And we changed the way we work. The project won the engineering track of the hackathon and we got busy preparing to deploy it.

Undercover Agent at Work

Immediately after the hackathon, we integrated the autonomous agent into our CI pipeline. It was the first LLM-backed pipeline deployed on tinder_ios, which meant we were figuring out patterns that didn’t yet exist.

Running an autonomous agent overnight sounds simple enough until you think about what that means at scale. We had to solve for several competing concerns:

- Resource usage: the agent couldn’t dominate the CI queue. Other builds needed to run simultaneously.

- Throughput: if it moved too slowly, it wouldn’t cover enough ground to make a dent in the debt.

- Scheduling: we implemented day-of-week modulo scheduling to work through all of the targets systematically.

- PR orchestration: with 20 to 30 approved PRs in flight at any given time, we needed smart merging logic that understood when engineers were actively working on a file and backed off to avoid conflicts.

- Skills and guidelines: the agent needed to code like a Tinder engineer, so we wrote structured conventions covering testing frameworks, patterns, and mocks, turning tacit knowledge into something the agent could follow.

- Guardrails: operational guardrails ensure the agent does what it was designed to do and nothing more. Coverage must go up and tests must be stable. Anything that doesn't meet that bar gets thrown away entirely rather than submitted as a marginal PR.
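
One plausible reading of day-of-week modulo scheduling, sketched in Python: give every target a stable index, and on each night pick the targets whose index modulo 7 equals the current weekday. The bucketing rule, the function name, and the Bazel labels are assumptions made for illustration. The actual scheme may differ.

```python
import datetime


def targets_for(day, targets):
    """Return the targets scheduled for `day` under index-modulo-7 bucketing."""
    weekday = day.weekday()  # Monday is 0, Sunday is 6
    # Sorting gives each target a stable index from one night to the next.
    return [t for i, t in enumerate(sorted(targets)) if i % 7 == weekday]


if __name__ == "__main__":
    # Hypothetical Bazel test targets, for illustration only.
    all_targets = ["//Modules/M%02d:Tests" % i for i in range(10)]
    print(targets_for(datetime.date.today(), all_targets))
```

With this rule every target is visited exactly once per week, and the nightly workload is roughly one seventh of the total, which helps with the resource and throughput concerns listed above.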

The agent runs nightly and stops after creating 10 pull requests per run. That ceiling keeps it from flooding the queue while still making steady progress. And throughout all of it, humans stay in the loop. The agent proposes; engineers review and approve.
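
The guardrail and the nightly cap combine into a simple selection rule, sketched below in Python. The cap of 10 comes from the text above. The `Candidate` fields, the definition of "stable" as three consecutive passing runs, and the function names are hypothetical and only illustrate the shape of the check.

```python
from dataclasses import dataclass

MAX_PRS_PER_RUN = 10       # nightly ceiling described above
REQUIRED_STABLE_RUNS = 3   # assumption: what "stable" means in this sketch


@dataclass
class Candidate:
    target: str
    coverage_before: float
    coverage_after: float
    stable_runs: int  # consecutive passing runs of the new tests


def passes_guardrails(candidate):
    """Coverage must go up and the new tests must be stable."""
    return (
        candidate.coverage_after > candidate.coverage_before
        and candidate.stable_runs >= REQUIRED_STABLE_RUNS
    )


def select_prs(candidates):
    """Discard anything below the bar, then stop at the nightly cap."""
    selected = []
    for candidate in candidates:
        if len(selected) >= MAX_PRS_PER_RUN:
            break
        if passes_guardrails(candidate):
            selected.append(candidate)
    return selected
```

Candidates that fail either check are dropped entirely rather than submitted with a weaker claim, which mirrors the "thrown away" rule in the guardrails bullet.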

What We've Learned

We're still early in this deployment, but a few lessons have already become clear.

- MCP is a powerful abstraction for agentic pipelines. Connecting an agent to your build system via MCP means it can observe the effects of its own actions in real time. That feedback loop is what makes the difference between an agent that guesses and one that learns.

- Hermetic builds are critical for repeatable results. Because our Bazel build is hermetic, we could trust that local behavior would replicate in CI. Write once, works everywhere.

- Skills compound. Writing testing conventions for the agent clarified our own standards. As we expand agentic editing across the codebase, that foundation transfers.

What's Next

The pipeline is live and coverage is going up. But the patterns we've built (MCP-connected agents, hermetic build feedback loops, skill-based guidelines, and PR orchestration) are not specific to test coverage. They're a general architecture for autonomous code editing, one that could apply to lint fixes, dead code removal, documentation, or any high-volume task engineers don't have time for.

We built Undercover Agent to solve a problem every engineering team knows: great intentions, not enough time. Turns out, solving that problem opened up something much bigger.

The agent works while we sleep. Every morning, we wake up to a cleaner codebase.